Probabilistic Data Structures

When dealing with massive datasets, perfect precision often becomes a bottleneck. This series explores probabilistic data structures, which are elegant algorithms that trade a tiny margin of error for massive gains in memory efficiency and processing speed.

Articles

Data Structures for Large-Scale Systems

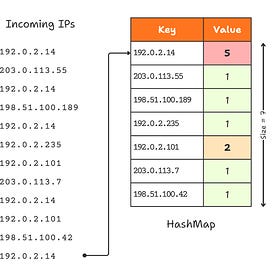

Imagine you’ve just deployed a new microservice, a simple API gateway, and initially, traffic is pretty manageable, maybe a few requests per second. And you’re logging every IP address to track unique visitors and block malicious spammers.

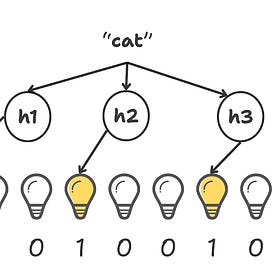

Probabilistic Data Structures: The Bloom Filter Deep Dive

In the last post, we hit the Memory Wall. We realised that storing millions of exact keys in RAM isn’t just expensive, it’s a recipe for crashing your pods and burning money. We introduced the concept of Probabilistic Data Structures that trade a tiny bit of accuracy for a massive gain in efficiency.

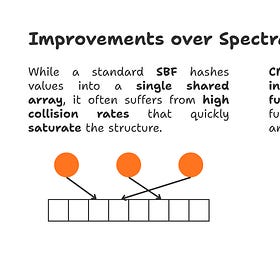

The Counting Tax: Trading Exact Numbers for Infinite Scale

You are managing a core router processing 10 million packets per second. To keep the network healthy, you must defend against traffic floods designed to slow processing. These threats generally arrive in two distinct flavours:

The Count-Min Sketch Deep Dive

In our previous post, we explored the reality of scale: tracking massive data streams using traditional HashMaps quickly exhausts main memory and introduces crippling disk I/O latency.

Code

The above posts are accompanied with implementations of the concepts discussed. Click below if you are interested to know more!

More Coming Soon